The Technical Definition of Dynamic Range

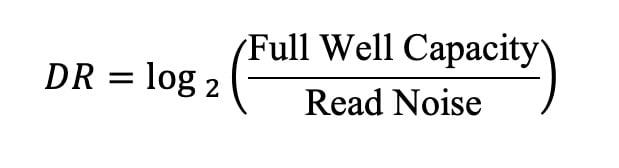

To understand why the industry’s focus on dynamic range has become disproportionate, it is necessary to define what the metric actually represents. At its fundamental level, dynamic range is the ratio between the maximum signal a sensor can record before saturating (known as the full well capacity) and the minimum signal it can distinguish from the noise floor (the read noise). In digital photography, this ratio is expressed in "stops," where each stop represents a doubling or halving of the light.

The mathematical formula for dynamic range is the logarithm (base 2) of the ratio of full well capacity to read noise, with both quantities measured in electrons. This definition highlights a crucial reality: dynamic range is not a single, adjustable setting that engineers can simply increase. It is the result of competing physical constraints. Improving the highlight headroom requires increasing the full well capacity, which is inherently tied to the physical size of the pixel and the structure of the photodiode. Conversely, improving shadow performance requires reducing read noise, a task with diminishing returns: once the electronics are quiet enough, the remaining noise is dominated by photon shot noise, the inherent randomness in the arrival of photons, which no circuit design can remove.
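As a rough illustration of the formula, the following Python sketch computes dynamic range in stops from full well capacity and read noise. The function name and the pixel values (50,000 electrons of full well, 3 electrons of read noise) are hypothetical, chosen only to make the arithmetic concrete.

```python
import math


def dynamic_range_stops(full_well_e: float, read_noise_e: float) -> float:
    """Dynamic range in stops: log2(full well capacity / read noise)."""
    if full_well_e <= 0 or read_noise_e <= 0:
        raise ValueError("full well and read noise must be positive")
    return math.log2(full_well_e / read_noise_e)


# Hypothetical pixel, not a measurement of any real camera.
baseline = dynamic_range_stops(50_000, 3.0)

# Halving the read noise or doubling the full well each adds one stop.
quieter = dynamic_range_stops(50_000, 1.5)
deeper = dynamic_range_stops(100_000, 3.0)

print(f"baseline: {baseline:.2f} stops")
print(f"half the read noise: +{quieter - baseline:.2f} stops")
print(f"double the full well: +{deeper - baseline:.2f} stops")
```

Because the ratio sits inside a logarithm, every additional stop demands another doubling of full well capacity or halving of read noise, which is why gains become harder to find as sensors mature.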

The Historical Context: The 2012 Revolution

The current obsession with dynamic range is not without historical merit. The industry underwent a genuine technological revolution roughly a decade ago, spearheaded by the release of the Nikon D800 and D800E in 2012, followed closely by the Sony a7R in 2013. These cameras utilized a 36-megapixel sensor that introduced a dramatic reduction in read noise at base ISO. This was achieved through significant advancements in on-chip Analog-to-Digital Converter (ADC) design, which minimized the distance the analog signal had to travel before being digitized, thereby reducing interference and signal degradation.

For photographers, this shift was transformative. It ushered in the era of "ISO-invariant" sensors, where shadows could be lifted by five or more stops in post-processing without the catastrophic introduction of banding or "salt-and-pepper" noise. This elasticity in RAW files allowed landscape photographers to capture high-contrast scenes in a single exposure, effectively rendering the traditional graduated neutral density filter obsolete for many applications. This was a period of rapid, tangible progress where higher dynamic range directly translated to new creative possibilities.

The Data of Stagnation: A Decade of Plateaus

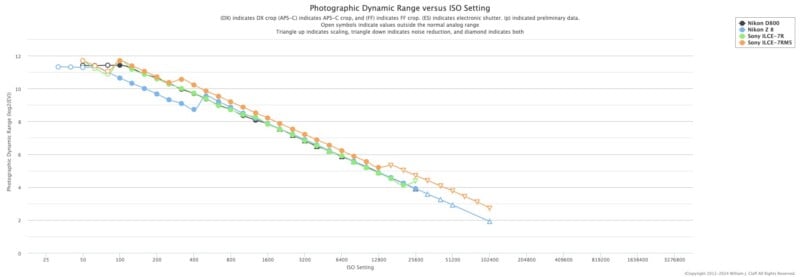

While the leap from 2010-era sensors to 2014-era sensors was monumental, the progress since then has been remarkably incremental. According to data from independent testing sites like Photons to Photos, the measured photographic dynamic range of flagship cameras has remained largely stagnant for nearly ten years.

A comparison between the Nikon D800 (released in 2012) and the Nikon Z8 (released in 2023) reveals that despite more than a decade of engineering, the base ISO dynamic range remains virtually identical, clustering around 11 to 12 stops of "photographic" dynamic range (a stricter measurement than the theoretical 14+ stops often cited by manufacturers). Similarly, Sony’s a7R series, from the original model to the current a7R V, shows that while resolution and autofocus have improved substantially, the fundamental ability of the sensor to record a range of light has reached a physical ceiling.

This plateau is the result of engineers hitting the limits of silicon-based CMOS technology. Read noise in modern sensors is already incredibly low—often less than two electrons. At this level, the primary source of noise in an image is no longer the camera’s electronics, but the light itself (shot noise). Unless there is a fundamental shift in sensor material or architecture, the "DR arms race" is effectively over.
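A simple noise model helps show why further reductions in read noise stop paying off. Assuming shot noise equals the square root of the signal in electrons (Poisson statistics) and adds in quadrature with read noise, the sketch below reports what share of the noise variance comes from the light itself. The function names and signal levels are illustrative assumptions.

```python
import math


def total_noise_e(signal_e: float, read_noise_e: float) -> float:
    """Shot noise (sqrt of signal) and read noise combined in quadrature."""
    return math.sqrt(signal_e + read_noise_e ** 2)


def shot_noise_fraction(signal_e: float, read_noise_e: float) -> float:
    """Share of the total noise variance contributed by shot noise."""
    return signal_e / (signal_e + read_noise_e ** 2)


READ_NOISE_E = 2.0  # assumed value, in line with "less than two electrons"

for signal in (1, 4, 16, 100, 1000):
    share = shot_noise_fraction(signal, READ_NOISE_E)
    noise = total_noise_e(signal, READ_NOISE_E)
    print(f"{signal:>5} e- signal: noise {noise:6.2f} e-, shot share {share:.0%}")
```

Under this model the crossover sits at a signal equal to the square of the read noise, here just 4 electrons. Everything brighter than the very deepest shadows is already limited by the light rather than by the electronics.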

The Misunderstanding of Bit Depth and Usability

A common point of confusion in the dynamic range debate is the role of bit depth, specifically the transition from 14-bit to 16-bit ADCs seen in medium format systems like the Fujifilm GFX series. There is a persistent myth that 16-bit files offer more dynamic range. However, increasing the precision of the analog-to-digital conversion beyond the sensor’s noise floor does not add more information; it simply samples the existing noise more finely.

In practical testing, there is almost no discernible difference in dynamic range between 14-bit and 16-bit modes on modern sensors because the noise floor is already higher than the precision offered by the additional bits. The benefit of higher bit depth is found in smoother tonal gradations and reduced posterization during extreme color grading, rather than the recovery of additional highlight or shadow detail.
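The bit-depth argument can be sketched numerically. The model below assumes the ADC spreads the full well evenly across its codes, that quantization behaves like uniform noise with a standard deviation of one step divided by the square root of 12, and that it adds in quadrature with read noise. These are textbook simplifications, and the pixel values are hypothetical.

```python
import math


def quantization_noise_e(full_well_e: float, bits: int) -> float:
    """Quantization noise in electrons for an ideal ADC spanning the full well."""
    step_e = full_well_e / (2 ** bits)  # size of one ADC code in electrons
    return step_e / math.sqrt(12)


def dr_with_adc_stops(full_well_e: float, read_noise_e: float, bits: int) -> float:
    """Dynamic range in stops once quantization noise joins the noise floor."""
    q = quantization_noise_e(full_well_e, bits)
    noise_floor = math.sqrt(read_noise_e ** 2 + q ** 2)
    return math.log2(full_well_e / noise_floor)


# Hypothetical pixel: 50,000 e- full well, 3 e- read noise.
dr14 = dr_with_adc_stops(50_000, 3.0, 14)
dr16 = dr_with_adc_stops(50_000, 3.0, 16)
print(f"14-bit: {dr14:.2f} stops")
print(f"16-bit: {dr16:.2f} stops")
print(f"gain from two extra bits: {dr16 - dr14:.2f} stops")
```

With these assumed numbers, the two extra bits buy well under a tenth of a stop, because read noise rather than the converter's step size sets the floor.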

Furthermore, "usable" dynamic range is a subjective threshold. While a sensor may be rated for 14 stops in a laboratory, that figure counts everything down to the point where signal merely equals noise, and detail at that level is far too noisy to be aesthetically pleasing to a human viewer. Most photographers require a Signal-to-Noise Ratio (SNR) of at least 3, and often 5, for a "clean" image. When these higher standards are applied, the usable range of even the best sensors drops to 9 or 10 stops, which is still more than sufficient for the vast majority of photographic scenarios.
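To see how a stricter SNR threshold eats into the rated figure, the sketch below solves for the smallest signal that reaches a target SNR and measures the range from there up to full well. It assumes a per-pixel model with shot noise and read noise only, and the pixel values are hypothetical. It ignores the viewing-size normalization that published "photographic" measurements apply, so its absolute numbers will not match those charts.

```python
import math


def min_signal_for_snr(target_snr: float, read_noise_e: float) -> float:
    """Smallest signal S (electrons) with S / sqrt(S + r^2) == target_snr.

    Squaring gives S^2 - k^2 * S - k^2 * r^2 = 0; take the positive root.
    """
    k2 = target_snr ** 2
    return (k2 + math.sqrt(k2 * k2 + 4 * k2 * read_noise_e ** 2)) / 2


def usable_dr_stops(full_well_e: float, read_noise_e: float, target_snr: float) -> float:
    """Stops between full well and the darkest signal meeting the SNR target."""
    return math.log2(full_well_e / min_signal_for_snr(target_snr, read_noise_e))


# Hypothetical pixel: 50,000 e- full well, 3 e- read noise.
for snr in (1, 3, 5):
    stops = usable_dr_stops(50_000, 3.0, snr)
    print(f"SNR >= {snr}: {stops:.1f} usable stops")
```

The trend is the point rather than the exact values: each step up in the required SNR removes more of the deepest shadows from the usable range.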

The Output Paradox: Displays and Prints

Perhaps the strongest argument against the worship of dynamic range is the limitation of the output medium. Captured data is useless if it cannot be displayed. A standard sRGB computer monitor or smartphone screen can only reproduce approximately 6 to 8 stops of dynamic range. High-end HDR (High Dynamic Range) displays, such as Apple’s XDR monitors, can push this further, but they rely heavily on aggressive tone mapping to "squeeze" the sensor’s data into a viewable format.

The constraints of print are even more severe. A high-quality inkjet print on matte paper may only achieve a dynamic range of 6 stops, while glossy papers might reach 7. To create a successful image, a photographer must inevitably compress the 12+ stops of captured data into a much narrower range. This process, known as tone mapping, requires artistic judgment. Having "more" dynamic range in the RAW file provides more flexibility in how that compression happens, but it does not inherently make the final image better. In fact, an obsession with preserving every detail in the brightest highlights and deepest shadows often leads to "flat," HDR-style images that lack the contrast and depth necessary for a compelling visual narrative.

Future Frontiers: Computational and Dual-Gain Solutions

While traditional sensor improvements have slowed, the industry is looking toward new methods to expand the perception of dynamic range. One of the most promising areas is Dual Gain Output (DGO) technology, which has long been a staple in high-end cinema cameras like the Arri Alexa and Canon’s Cinema EOS line. DGO sensors sample the pixel’s signal at two different amplification levels simultaneously—one optimized for highlight saturation and the other for shadow noise reduction—and combine them into a single 16-bit file. We are beginning to see this trickle down into stills-focused cameras, such as the Panasonic GH6 and the Sony a7 V.
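The dual-gain idea can be sketched in a few lines. This is a deliberately simplified model, not any manufacturer's pipeline: it assumes both readings have already been converted back to electrons, and it switches hard between them where real implementations are proprietary and typically blend. All names and values are hypothetical.

```python
import math


def merge_dual_gain(high_gain_e: float, low_gain_e: float, high_gain_clip_e: float) -> float:
    """Use the cleaner high-gain reading unless it has clipped."""
    if high_gain_e < high_gain_clip_e:
        return high_gain_e  # shadows and midtones: lower read noise
    return low_gain_e  # highlights: only the low-gain path still has headroom


# Hypothetical characteristics of the two readout paths.
LOW_GAIN_FULL_WELL_E = 50_000.0
LOW_GAIN_READ_NOISE_E = 6.0
HIGH_GAIN_CLIP_E = 5_000.0
HIGH_GAIN_READ_NOISE_E = 1.5

shadow_pixel = merge_dual_gain(120.0, 131.0, HIGH_GAIN_CLIP_E)
highlight_pixel = merge_dual_gain(5_000.0, 20_000.0, HIGH_GAIN_CLIP_E)
print(shadow_pixel, highlight_pixel)

# The merged output pairs the low-gain ceiling with the high-gain noise floor.
single_path = math.log2(LOW_GAIN_FULL_WELL_E / LOW_GAIN_READ_NOISE_E)
merged = math.log2(LOW_GAIN_FULL_WELL_E / HIGH_GAIN_READ_NOISE_E)
print(f"gain from merging: {merged - single_path:.1f} stops")
```

In this model the benefit equals the base-2 logarithm of the ratio between the two read noise figures, which is why the technique helps most when the low-gain path is comparatively noisy.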

Additionally, computational photography is poised to bypass physical sensor limits. Features like "Live ND" and Handheld High Res (HHHR) in OM System (formerly Olympus) cameras use multi-shot stacking to average out noise, effectively increasing the dynamic range by several stops through software rather than hardware. These methods mimic the behavior of a much larger sensor or a longer exposure, suggesting that the next "revolution" in dynamic range will be digital and algorithmic rather than purely physical.
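The arithmetic behind stacking is straightforward if the noise in each frame is random and independent: averaging N frames lowers the noise by the square root of N, which is worth half a stop per doubling of the frame count. The sketch below states that rule and checks it with a small seeded simulation. Gaussian noise, perfect frame alignment, and the function names are all assumptions. It does not describe how any particular camera implements the feature.

```python
import math
import random
import statistics


def stacking_gain_stops(n_frames: int) -> float:
    """Stops gained at the noise floor by averaging n independent frames."""
    if n_frames < 1:
        raise ValueError("need at least one frame")
    return 0.5 * math.log2(n_frames)


def simulated_noise_after_stacking(n_frames: int, noise_sigma: float,
                                   n_pixels: int = 5000, seed: int = 0) -> float:
    """Standard deviation of a flat dark patch after averaging noisy frames."""
    rng = random.Random(seed)
    averaged = [
        sum(rng.gauss(0.0, noise_sigma) for _ in range(n_frames)) / n_frames
        for _ in range(n_pixels)
    ]
    return statistics.pstdev(averaged)


for n in (1, 4, 16, 64):
    print(f"{n:>2} frames: +{stacking_gain_stops(n):.1f} stops")

# 16 frames of noise with sigma 4 should average down to roughly sigma 1.
print(f"simulated: {simulated_noise_after_stacking(16, 4.0):.2f}")
```

Under these assumptions, each additional stop costs four times as many frames, so the gains from stacking are real but increasingly expensive in capture time.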

Impact and Implications: A Shift in Priority

The continued emphasis on dynamic range as a marketing tool has led to an inversion of photographic priorities. Photographers are increasingly shooting "defensively"—underexposing to protect highlights and relying on the sensor’s recovery capabilities to fix the image later. While this provides a safety net, it often comes at the expense of intentionality and proper lighting technique.

For the professional market, the marginal differences in dynamic range between a Sony, Canon, or Nikon flagship are now negligible for the vast majority of assignments. The industry’s focus is shifting, and should shift, toward attributes that have a more profound impact on the daily workflow: autofocus reliability, subject tracking, thermal management, and the quality of the glass.

In conclusion, dynamic range is a vital component of the digital imaging pipeline, but it is no longer the bottleneck of creative expression. Modern cameras have reached a level of maturity where the sensor is rarely the limiting factor in a photograph’s success. The gap between what a camera can record and what a photograph actually requires is now wider than ever. Closing that gap is no longer a challenge for engineers; it is a challenge for the photographer’s vision. As the industry moves forward, the "quality" of an image should be judged by its composition, light, and emotional resonance, rather than the number of stops hidden in its shadows.