The emergence of artificial intelligence in the field of digital imaging has sparked a profound debate regarding the boundaries between technological convenience and the preservation of historical integrity. At the center of this controversy is the distinction between "restoration" and "conservation," a dichotomy that has long been debated within the hallowed halls of the world’s most prestigious art institutions. While software developers continue to push the capabilities of generative AI to "fix" aged, damaged, or low-resolution photographs, critics and conservationists argue that these tools often cross a line, moving from the preservation of history to the creation of a sanitized, and ultimately false, reality.

The Philosophical Foundation: Restoration versus Conservation

The roots of this debate can be traced back to the specialized laboratories of Florence, Italy, home to the Opificio delle Pietre Dure. Founded in 1588 by Ferdinando I de’ Medici, the Opificio began as a workshop for semi-precious stone inlay but has evolved into one of the world’s leading institutes for art restoration and conservation. It operates under the Italian Ministry of Cultural Heritage and serves as a primary authority on the scientific maintenance of priceless artifacts.

For the scientists and scholars at the Opificio, the terminology used to describe their work is of paramount importance. While the public often uses the term "restoration," many professionals in the field harbor a distinct preference for "conservation." This preference is not merely a matter of semantics; it represents a fundamental philosophy of respect for the original creator and the historical timeline of the object.

To restore, by definition, is to bring back or reinstate a previous state. In the context of art, this often requires an active intervention where missing elements are recreated. If a fresco has lost a section of pigment due to moisture, a restorer might be tempted to repaint that section. However, the conservationists at the Opificio argue that such actions are inherently invasive. Any addition made by a modern hand, no matter how skilled, is an interpretation rather than an original fact. Conservation, by contrast, focuses on protecting what remains, preventing further decay, and honoring the work as it exists in the present. It is a philosophy of "do no harm," where the goal is to stabilize the artifact without introducing modern fabrications.

The ON1 Restore AI Controversy

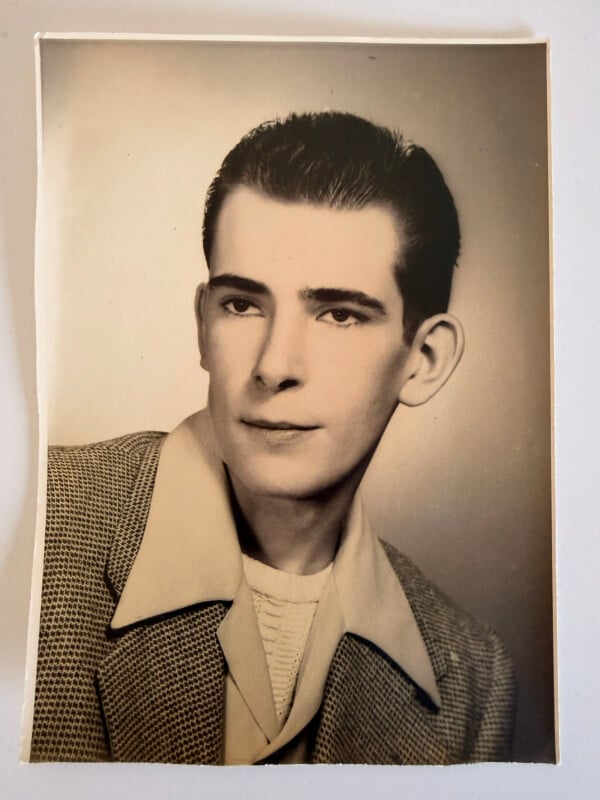

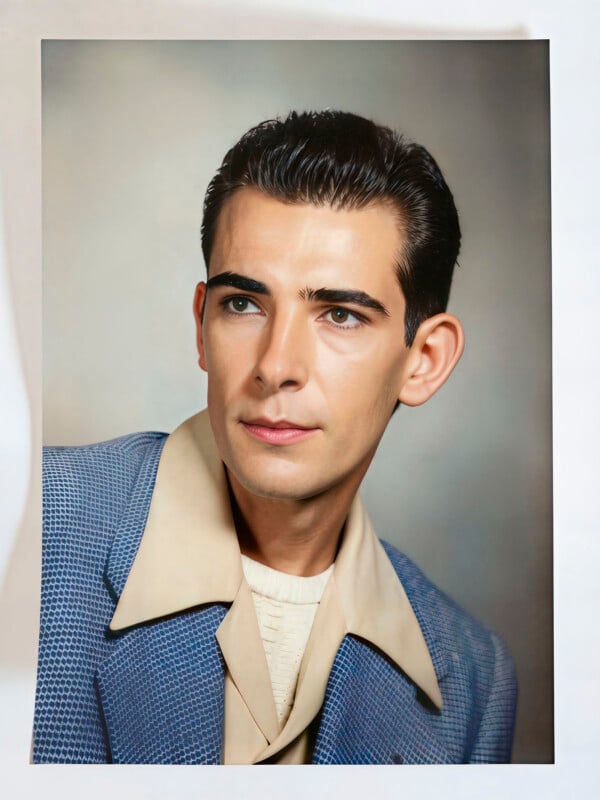

This philosophical tension reached a boiling point in the digital imaging community following the recent showcase of ON1 Restore AI. The software, designed to automate the repair of old family photographs, was marketed as a solution for recovering lost details, removing scratches, and colorizing monochrome images. However, when the results were scrutinized by photography experts and historians, the output was described by some as "grotesque" and "nightmare fuel."

The controversy centered on several key examples provided in the software’s promotional materials. In one instance, a vintage black-and-white portrait of a young man was processed through the AI. The resulting image did more than just remove grain; it fundamentally altered the man’s facial structure, smoothed his skin to an unnatural degree, and "hallucinated" a blue color for his jacket. There was no historical evidence in the original scan to suggest the jacket was blue, yet the AI made a definitive choice, overriding the ambiguity of the original medium.

In another example, a large group portrait from the early 20th century was subjected to the software’s restoration algorithm. While the AI successfully sharpened the blurred faces, it did so by replacing original features with synthetic ones. The individuals in the "restored" photo no longer resembled the people in the original; their identities had been effectively overwritten by the AI’s training data. This phenomenon, often referred to as "identity theft by algorithm," raises significant concerns about the role of photography as a record of human existence.

Technical Analysis: How AI Hallucinates History

The fundamental issue with AI restoration lies in the mechanics of Generative Adversarial Networks (GANs) and diffusion models. Unlike traditional digital repair tools, which might use surrounding pixels to patch a scratch, generative AI "imagines" what should be there based on millions of other images it has seen during its training phase.
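The "surrounding pixels" approach of traditional repair tools can be illustrated with a minimal sketch. The function below is a hypothetical, simplified stand-in (the name `patch_scratch` and the neighbour-averaging rule are assumptions made for illustration, not the method of any particular product): it fills each damaged pixel with the average of the undamaged pixels next to it, so every repaired value is derived from data already in the image.

```python
def patch_scratch(image, mask):
    """Fill damaged pixels with the mean of their undamaged 4-neighbours.

    image: list of rows of grayscale values (0-255).
    mask:  same shape as image, True where a pixel is damaged.
    The fill works inward from the edge of the damage, one ring per pass.
    """
    height, width = len(image), len(image[0])
    result = [row[:] for row in image]  # never modify the original scan
    damaged = {(y, x) for y in range(height) for x in range(width) if mask[y][x]}

    while damaged:
        filled = {}
        for y, x in damaged:
            neighbours = [
                result[ny][nx]
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                if 0 <= ny < height and 0 <= nx < width and (ny, nx) not in damaged
            ]
            if neighbours:
                filled[(y, x)] = sum(neighbours) / len(neighbours)
        if not filled:
            break  # the whole image is damaged: there is no real data to borrow
        for (y, x), value in filled.items():
            result[y][x] = value
        damaged.difference_update(filled)
    return result


# A tiny 3x3 "scan" with one scratched pixel in the centre.
scan = [[10, 10, 10],
        [10, 255, 10],
        [10, 10, 10]]
scratch = [[False, False, False],
           [False, True, False],
           [False, False, False]]
repaired = patch_scratch(scan, scratch)
```

Because each filled value is an average of its neighbours, it can never fall outside the range of values already present around the damage. A generative model has no such constraint, which is the distinction the paragraph above draws.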

When an AI encounters a "soft scan"—a low-resolution or out-of-focus image—it does not actually find hidden details. Instead, it predicts what a human face or a piece of clothing typically looks like and overlays that prediction onto the original file. This process is known as "hallucination." Because the AI is optimized for aesthetic smoothness rather than historical accuracy, it often strips away the unique "imperfections" that constitute a person’s likeness.

Research in computer vision describes a trade-off between "perceptual quality" (how natural and detailed an image looks to the casual observer) and "fidelity" (how closely the image matches the ground truth): an AI model can score well on the first while scoring poorly on the second. In historical preservation, fidelity is the only metric that truly matters. By prioritizing a clean, modern aesthetic, AI restoration tools risk turning the historical record into a series of deepfakes.
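The gap between looking sharp and being faithful can be shown with a small worked example. The sketch below uses peak signal-to-noise ratio (PSNR), a standard fidelity measure, alongside a deliberately crude sharpness score (the average jump between neighbouring pixels). The sharpness score and the three pixel rows are assumptions invented for illustration; they are not measurements from any real software.

```python
import math


def mse(reference, candidate):
    """Mean squared error between two equal-length rows of pixel values."""
    return sum((r - c) ** 2 for r, c in zip(reference, candidate)) / len(reference)


def psnr(reference, candidate, peak=255):
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference."""
    error = mse(reference, candidate)
    if error == 0:
        return math.inf  # identical images
    return 10 * math.log10(peak ** 2 / error)


def sharpness(pixels):
    """Crude proxy for visible detail: mean absolute jump between adjacent pixels."""
    jumps = [abs(a - b) for a, b in zip(pixels, pixels[1:])]
    return sum(jumps) / len(jumps)


# Hypothetical one-row "images" (grayscale values 0-255).
truth = [50, 200, 50, 200, 50, 200]          # what was really in front of the lens
soft_scan = [125, 125, 125, 125, 125, 125]   # blurred: detail lost, nothing invented
hallucinated = [200, 50, 200, 50, 200, 50]   # crisp detail, but in the wrong places

soft_fidelity = psnr(truth, soft_scan)
fake_fidelity = psnr(truth, hallucinated)
```

In this toy case the hallucinated row is exactly as "sharp" as the ground truth, yet its PSNR is lower than that of the blurred scan: the invented detail moves the image further from what was actually recorded.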

The Response from the Industry

Following the backlash to the Restore AI demonstration, ON1 issued a formal apology to the press. The company stated that the examples included in the initial press kit were inadvertently released and did not represent the intended final output of the software. Furthermore, ON1 clarified that Restore AI was intended to be used as one component of a broader, more nuanced workflow rather than a one-click solution.

"The team inadvertently sent examples in the press kit that weren’t meant to be in there," a spokesperson for ON1 explained. "The Restore AI tool is meant to be part of a broader workflow that allows for user intervention and fine-tuning."

Despite this clarification, critics argue that the core technology remains problematic. Even within a "broader workflow," the underlying engine is still performing the same additive, transformative tasks that conservationists find objectionable. The concern is that as these tools become more accessible to the general public, the "conservation" mindset will be entirely supplanted by a desire for high-definition "restoration," leading to a gradual erosion of visual history.

Chronology of the Digital Restoration Shift

The transition from manual digital editing to automated AI restoration has occurred over a relatively short timeline, complicating the development of ethical guidelines:

- 1990–2010: The Manual Era. Software like Adobe Photoshop becomes the industry standard. Restoration is a painstaking manual process involving the Clone Stamp and Healing Brush tools. The human editor makes all creative decisions.

- 2010–2018: The Algorithmic Era. Content-Aware Fill and basic noise reduction algorithms are introduced. These tools automate repetitive tasks but still rely heavily on the existing data within the image.

- 2019–Present: The Generative Era. The rise of GANs, and later diffusion models, leads to tools like Remini, MyHeritage’s Deep Nostalgia, and Topaz Photo AI. These tools can generate entirely new pixels, colorize images automatically, and even animate still photos.

- 2024: The Ethics Crisis. As AI-generated artifacts become more prevalent, metadata standards from organizations like the Coalition for Content Provenance and Authenticity (C2PA), founded in 2021, draw growing attention as a way to distinguish between original captures and AI-altered images.

Broader Impact and the Future of Visual Memory

The implications of AI restoration extend far beyond the hobbies of genealogy enthusiasts. For museums, libraries, and national archives, the integrity of the photographic record is a matter of institutional mandate. If an archive "restores" its collection using generative AI, it may inadvertently introduce biases present in the AI’s training data. For example, AI models have been documented to struggle with the accurate representation of diverse ethnic features, often "whitewashing" or "Europeanizing" faces during the upscaling process.

Furthermore, there is the question of the "aura" of the original object. As philosopher Walter Benjamin argued in his 1935 essay "The Work of Art in the Age of Mechanical Reproduction," the "aura" of a work of art is tied to its presence in time and space: its unique existence at the place where it happens to be. A faded, scratched photograph carries the weight of time; it is a physical witness to history. By erasing the effects of time through AI, we risk stripping the object of its historical authority.

As the technology continues to evolve, the industry faces a choice. One path leads toward a future where every family photo is rendered in 4K resolution, regardless of whether the details are real or imagined. The other path, championed by the conservationists of Florence and digital skeptics alike, prioritizes the "broken" truth of the original.

In the final analysis, the goal of preserving history is not to make the past look like the present. It is to ensure that the past remains recognizable as itself. When AI "stole the identity" of the people in the group photo, as critics observed, it did so by prioritizing the machine’s vision over the human reality. For those who value the authenticity of the historical record, the "broken" concept of AI restoration is a reminder that some things, once lost to time, are better left lost than falsely recreated.